Artificial intelligence and advanced analytics are often discussed as transformative technologies, but their success depends on something far more fundamental: reliable access to high-quality data. Without consistent and automated data flows, even the most advanced models fail to deliver meaningful results.

Many organisations struggle at this stage. Data is collected across multiple platforms (CRMs, operational systems, marketing tools, and third-party services), each operating in isolation. When these systems are connected manually, updates arrive late and copies of the same data drift out of sync.

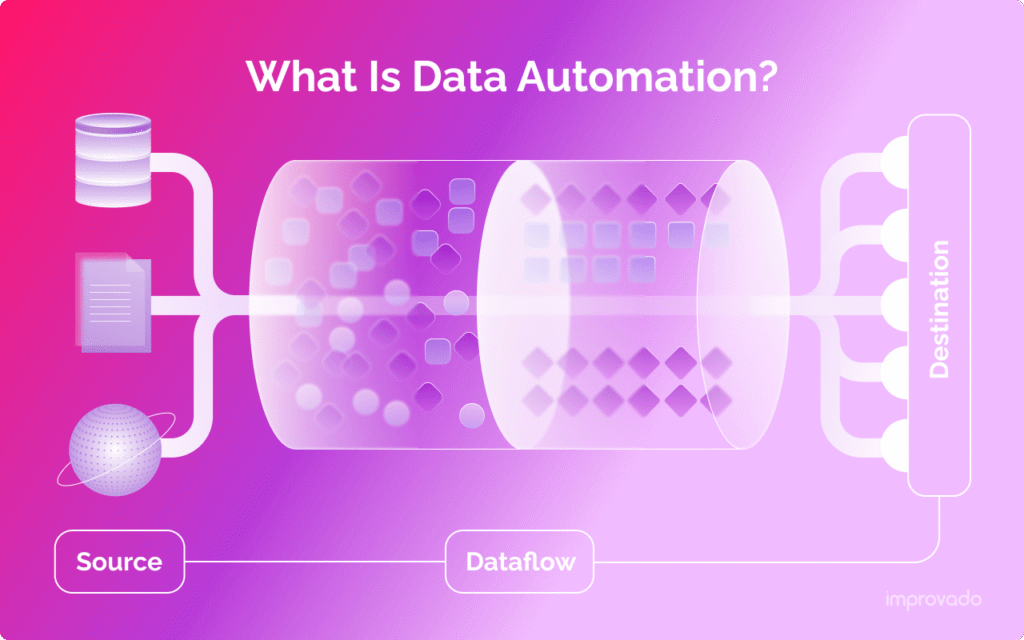

Implementing data automation addresses this challenge by standardising how information is collected, transformed, and delivered across the organisation. Automated processes help keep analytics teams and AI systems working from accurate, up-to-date datasets, which reduces the risk of biased or misleading outcomes caused by stale or inconsistent inputs.
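To make the idea of standardised collection and transformation concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Customer` schema, the two source layouts (`from_crm`, `from_marketing`), and the field names are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Customer:
    """Common schema that every source is mapped onto (hypothetical)."""
    email: str
    full_name: str
    source: str
    loaded_at: datetime


def from_crm(row: dict) -> dict:
    # Hypothetical CRM export: separate first/last name fields.
    return {"email": row["Email"], "full_name": f'{row["First"]} {row["Last"]}'}


def from_marketing(row: dict) -> dict:
    # Hypothetical marketing-tool export: a single name field.
    return {"email": row["email_address"], "full_name": row["name"]}


def standardise(rows, mapper, source, now=None):
    """Map source rows onto the common schema and normalise the values."""
    now = now or datetime.now(timezone.utc)
    return [
        Customer(
            email=fields["email"].strip().lower(),            # one canonical email form
            full_name=" ".join(fields["full_name"].split()),  # collapse stray whitespace
            source=source,
            loaded_at=now,
        )
        for fields in (mapper(row) for row in rows)
    ]


def deduplicate(customers):
    """Keep the first record seen for each email address."""
    seen = {}
    for customer in customers:
        seen.setdefault(customer.email, customer)
    return list(seen.values())


def run_pipeline(crm_rows, marketing_rows):
    """Collect from both sources, standardise, then deliver one clean list."""
    combined = (standardise(crm_rows, from_crm, "crm")
                + standardise(marketing_rows, from_marketing, "marketing"))
    return deduplicate(combined)
```

The point of the sketch is that each source gets a small mapping function while the normalisation rules live in one place, so every downstream consumer sees the same definition of a customer record.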

From a governance perspective, automation also improves compliance and auditability. Clearly defined workflows make it easier to track where data originates, how it is processed, and who has access to it—an increasingly important requirement as data regulations continue to tighten globally.
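One way to picture this kind of auditability is a pipeline step that records its own lineage each time it runs. The following is a rough sketch under stated assumptions: the `audited` decorator, the in-memory `AUDIT_LOG`, and the `drop_missing_email` step are hypothetical names, and a real deployment would write to durable, access-controlled storage rather than a Python list.

```python
import functools
import getpass
import hashlib
import json
from datetime import datetime, timezone

AUDIT_LOG = []  # illustration only; real systems need durable, append-only storage


def fingerprint(rows):
    """Stable SHA-256 hash of a dataset so later changes can be detected."""
    payload = json.dumps(rows, sort_keys=True, default=str).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def audited(step_name):
    """Decorator that appends one lineage record per step execution."""
    def wrap(func):
        @functools.wraps(func)
        def inner(rows, *, actor=None):
            result = func(rows)
            AUDIT_LOG.append({
                "step": step_name,                        # how the data was processed
                "actor": actor or getpass.getuser(),      # who ran the step
                "ran_at": datetime.now(timezone.utc).isoformat(),
                "rows_in": len(rows),
                "rows_out": len(result),
                "input_hash": fingerprint(rows),          # where the data came from
                "output_hash": fingerprint(result),
            })
            return result
        return inner
    return wrap


@audited("drop_missing_email")
def drop_missing_email(rows):
    """Example transformation: discard records without an email address."""
    return [row for row in rows if row.get("email")]
```

Because each record carries the input and output fingerprints, consecutive steps can be chained together after the fact to reconstruct how a given dataset was produced.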

Modern data pipelines are no longer limited to batch processing or overnight updates. They support near real-time ingestion and transformation, enabling faster insights and more responsive systems. This capability is particularly critical for applications such as predictive analytics, customer personalisation, and operational monitoring.
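A common way to move from overnight batches towards near real-time ingestion is to process only what has changed since the last run, tracked with a "watermark". The sketch below assumes each event carries a comparable `ts` field; the function name `ingest_new` and the event layout are hypothetical.

```python
def ingest_new(events, watermark):
    """Return events newer than the watermark, plus the advanced watermark.

    Assumes every event is a dict with a comparable "ts" value
    (for example an integer epoch timestamp).
    """
    fresh = sorted(
        (event for event in events if event["ts"] > watermark),
        key=lambda event: event["ts"],
    )
    # Advance the watermark only if something new arrived.
    new_watermark = fresh[-1]["ts"] if fresh else watermark
    return fresh, new_watermark
```

Calling this every few seconds or minutes, and persisting the returned watermark between calls, keeps each run small and avoids reprocessing the full dataset.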

As organisations continue to invest in AI-driven initiatives, those that prioritise automated data foundations are likely to see faster returns and fewer roadblocks. In contrast, businesses that overlook this layer often find themselves constrained not by ambition, but by infrastructure.